Quick Summary: Structural edits usually beat full rewrites. The biggest wins for AI search come from answer-first openings, named-source citations, and clean schema markup.

Most content teams have heard some version of the advice: write clearly, use headings that match real questions, add citations, and be specific.

What most guidance skips is the order of operations: which pages to touch first, what to change, and how to confirm the work is doing anything useful.

This article focuses on that sequence.

Audit Your Existing Pages Before Rewriting Anything

Before editing a single word, answer two questions: which pages are already being accessed by AI crawlers, and which of those are turning that access into citations or visits?

A page that gets crawled but never cited has a structural problem, while a page that gets cited but drives no visits has a different one. A page that is neither crawled nor cited is invisible to AI retrieval systems.

Those are three different problems requiring three different fixes. Conflating them leads to wasted rewrites.
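The three-way split above can be expressed as a small triage rule. This is a minimal Python sketch, assuming you already have per-page counts for AI-crawler hits, citations in tracked AI answers, and AI-origin referral visits over the same window. The `PageSignals` fields and the label strings are hypothetical names, not part of any tool.

```python
from dataclasses import dataclass


@dataclass
class PageSignals:
    # Hypothetical per-page counts, all measured over the same audit window.
    url: str
    ai_crawls: int     # hits from AI crawlers
    citations: int     # appearances as a cited source in tracked AI answers
    ai_referrals: int  # visits arriving from AI-origin referrers


def diagnose(page: PageSignals) -> str:
    """Return which of the three problems (if any) the page shows."""
    if page.ai_crawls == 0 and page.citations == 0:
        return "invisible"            # neither crawled nor cited
    if page.citations == 0:
        return "crawled_not_cited"    # structural problem
    if page.ai_referrals == 0:
        return "cited_no_visits"      # a different problem
    return "working"                  # cited and sending visits


pages = [
    PageSignals("/pricing", ai_crawls=42, citations=0, ai_referrals=0),
    PageSignals("/guide", ai_crawls=18, citations=7, ai_referrals=0),
    PageSignals("/glossary", ai_crawls=0, citations=0, ai_referrals=0),
]
for p in pages:
    print(p.url, diagnose(p))
```

Keeping the diagnosis separate from the fix makes it easier to route each page to the right kind of edit instead of defaulting to a full rewrite.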

Start with a small set: the ten to twenty pages that already matter most to the business, including product pages, category explainers, and high-intent guides.

Confirm each has a stable published URL and a clean crawl record. Then check citation activity and AI-origin referral data separately before deciding what to rewrite.

If you do not have that measurement system yet, build it first. Optimizing pages you cannot measure is guesswork.
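One piece of that measurement system is separating AI-origin referral visits from everything else. A minimal sketch, assuming you can export (path, referrer) pairs from your analytics or server logs. The host list is an assumed, non-exhaustive example you would maintain yourself, and clicks from surfaces such as Google AI Overviews may arrive with an ordinary search referrer, so referrer matching alone will likely undercount them.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed, non-exhaustive list of referrer hosts counted as AI-origin.
AI_REFERRER_HOSTS = (
    "chatgpt.com",
    "chat.openai.com",
    "perplexity.ai",
    "gemini.google.com",
    "copilot.microsoft.com",
)


def is_ai_referral(referrer: str) -> bool:
    """True if the referrer's host is, or is a subdomain of, a listed AI host."""
    host = (urlparse(referrer).hostname or "").lower()
    return any(host == h or host.endswith("." + h) for h in AI_REFERRER_HOSTS)


def ai_referrals_by_page(hits):
    """Count AI-origin visits per landing path from (path, referrer) pairs."""
    counts = Counter()
    for path, referrer in hits:
        if is_ai_referral(referrer):
            counts[path] += 1
    return counts


hits = [
    ("/pricing", "https://chatgpt.com/"),
    ("/pricing", "https://www.google.com/"),
    ("/guide", "https://www.perplexity.ai/search?q=geo"),
    ("/guide", ""),
]
print(ai_referrals_by_page(hits))
```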

What AI Systems Actually Need From a Page

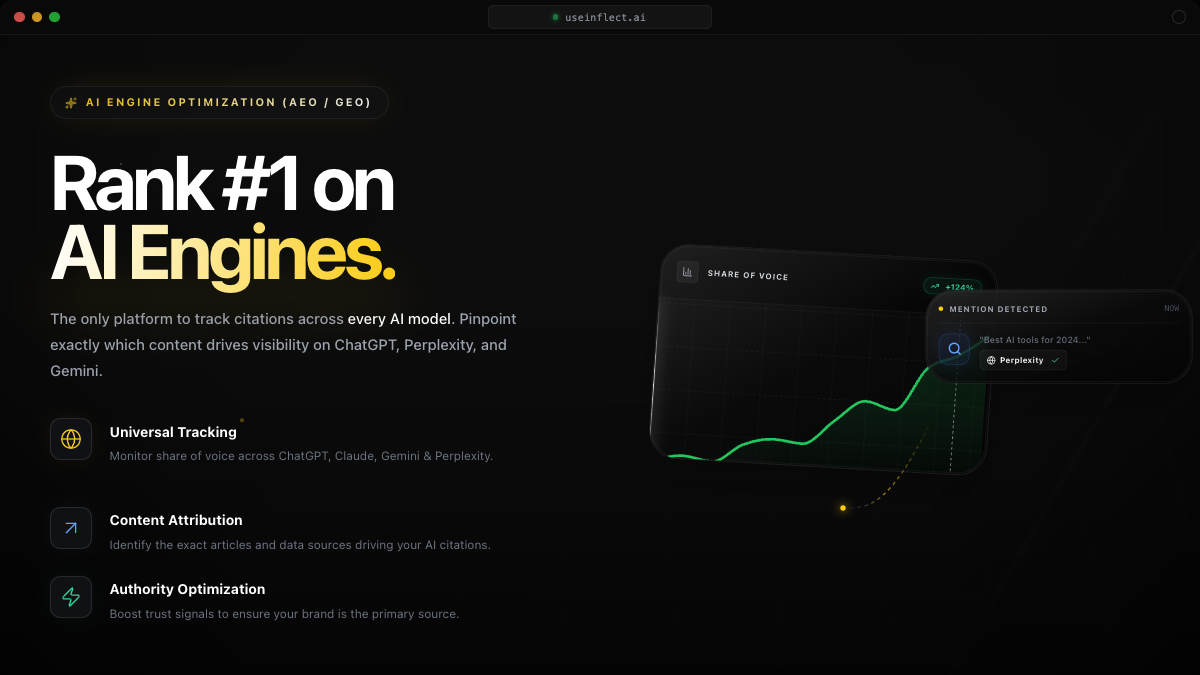

AI search engines like ChatGPT, Perplexity, and Google AI Overviews all do roughly the same job. They need to find the page, extract a useful answer, trust the source enough to cite it, and present that citation in a way that helps the user.

Perplexity's documentation on how it works says it prioritizes authoritative, reliable sources and filters for content that directly answers the query. In practice, that rewards pages that state the answer clearly and show obvious credibility signals such as named sources, supporting links, and organizational identity.

Google's guidance on structured data documents how machine-readable markup helps its systems understand what a page is about, what type of content it contains, and how it relates to adjacent entities.

A page with buried definitions, vague headings, or weak entity signals may read well to a person and still fail one of those extraction or trust checks.

The Changes That Most Reliably Improve Citability

In late 2023, Aggarwal et al. published "GEO: Generative Engine Optimization", a study of which content features increased a source's visibility in AI-generated search answers. The strongest changes were:

- Adding statistics and specific data points: improved GEO performance by about 37% in their experiments

- Adding quotations from authoritative sources: improved performance by about 34%

- Citing relevant sources explicitly: improved performance by about 14%

- Improving fluency and readability: produced consistent gains across engine types

The pattern is not random. AI systems respond to credibility signals, so a page that cites its claims, states specific numbers, and links to named sources is easier to reuse than one that makes the same points in vague language.

The three most useful edits on an underperforming page are:

- Add or surface specific data points with named sources

- Add explicit inline source links where the claims appear, not just a bibliography at the end

- Rewrite vague claims into concrete, quotable statements

How to Rewrite an Opening Section

The opening section is where extraction usually succeeds or fails. A model reading your page needs to identify the answer quickly, because if the answer is buried under scene-setting, it may be paraphrased poorly or skipped.

The practical test: can the first paragraph stand alone as a quotable answer to the implied question in your heading?

Before:

"AI search has changed how people find information online. Content teams are adapting to a new set of challenges as they work to stay visible in this evolving landscape."

That version is two sentences of setup with nothing citable in them.

After:

"To appear in AI-generated answers, a page needs to state its answer in the first two sentences, support it with a named source, and give the model a sentence it can quote without distorting the meaning."

That version states the requirement, ties it to an outcome, and gives the model something it can excerpt cleanly.

The rewrite rule: lead with the answer, not the context.

Adding Evidence and Citations to Existing Content

Many B2B content pages make claims without named sources, and that is one of the most fixable structural gaps.

The standard to aim for is simple: every meaningful factual claim should name the study, report, platform, or document it comes from, or be framed clearly as the author's or company's own analysis.

Unattributed claims are harder for AI systems to reuse without raising hallucination risk. A named, linked source gives the model a verification path, which is one reason pages with explicit citations tend to perform better in retrieval contexts.

The practical edit:

- Scan your page for claims that lack attribution.

- For each one, either find a source and link it inline or reframe it as company analysis.

- Rebuild the bibliography using linked titles, not bare URLs.

A bare URL is hard to parse and easy to misread. A linked title with a brief descriptor is both human-readable and machine-parseable.
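The scan-and-relink edit can be partly automated. The Python sketch below is a rough heuristic, not a citation checker: it assumes page text is available as a string, flags only sentences that contain a number but no inline link, and treats a bibliography line as bare when it is nothing but a URL. The function names and the HTML output format for linked titles are illustrative assumptions.

```python
import html
import re

SENTENCE_SPLIT = re.compile(r"(?<=[.!?])\s+")
LINK_MARKERS = ("http://", "https://", "<a ", "](")  # inline link patterns
BARE_URL_LINE = re.compile(r"^\s*(?:-\s*)?https?://\S+\s*$")


def unattributed_numeric_claims(text: str) -> list[str]:
    """Flag sentences that state a number but carry no inline link."""
    flagged = []
    for sentence in SENTENCE_SPLIT.split(text.strip()):
        has_number = any(ch.isdigit() for ch in sentence)
        has_link = any(marker in sentence for marker in LINK_MARKERS)
        if has_number and not has_link:
            flagged.append(sentence)
    return flagged


def is_bare_url_entry(line: str) -> bool:
    """True when a bibliography line is only a URL, optionally bulleted."""
    return bool(BARE_URL_LINE.match(line))


def linked_entry(title: str, url: str, descriptor: str) -> str:
    """Render a bibliography entry as a linked title plus a short descriptor."""
    href = html.escape(url, quote=True)
    return f'<a href="{href}">{html.escape(title)}</a>, {html.escape(descriptor)}'


page = ("Conversion rose 37% last year. "
        "Per [the report](https://example.com/r), churn fell 5%. "
        "We help teams ship faster.")
print(unattributed_numeric_claims(page))
```

Numbers without links are only one kind of unattributed claim, so a manual read is still needed; the scan just produces a first list quickly. Sentences you have deliberately reframed as company analysis will still be flagged and can be dismissed.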

Schema Markup: What Actually Matters

Schema is not magic, and adding JSON-LD to a structurally weak page does not make it more citable on its own.

Schema still matters because it solves specific problems that affect AI retrieval:

- Entity disambiguation: if your page is about a person, organization, product, or event, schema helps systems confirm the right entity

- Page type classification: BlogPosting, FAQPage, HowTo, and Article schemas signal what kind of content this is

- FAQ structured data: can expose individual question-and-answer pairs to AI Overviews and similar surfaces as clean, extractable units

Google's structured data documentation covers Article, FAQ, and HowTo markup among the types relevant to informational content, although Google has since narrowed where FAQ and HowTo rich results appear in Search.

For a GEO-focused blog article, the minimum useful schema set is:

- BlogPosting with headline, datePublished, dateModified, author, and publisher

- FAQPage covering any FAQ section

- BreadcrumbList for navigation context

Anything beyond that is a smaller, second-order optimization.
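As an illustration of that minimum set, the Python sketch below emits one JSON-LD document that combines `BlogPosting`, `FAQPage`, and `BreadcrumbList` in an `@graph`. The property names are schema.org vocabulary; the input values are placeholders, and modeling the author as a `Person` and the publisher as an `Organization` is an assumption you should adjust to your site.

```python
import json


def blog_jsonld(page: dict, faqs: list, crumbs: list) -> str:
    """Build BlogPosting + FAQPage + BreadcrumbList JSON-LD for one article.

    page:   headline, date_published, date_modified, author, publisher
    faqs:   list of (question, answer) pairs; FAQPage is omitted if empty
    crumbs: list of (name, url) pairs, ordered from the site root
    """
    graph = [{
        "@type": "BlogPosting",
        "headline": page["headline"],
        "datePublished": page["date_published"],
        "dateModified": page["date_modified"],
        "author": {"@type": "Person", "name": page["author"]},
        "publisher": {"@type": "Organization", "name": page["publisher"]},
    }]
    if faqs:
        graph.append({
            "@type": "FAQPage",
            "mainEntity": [
                {"@type": "Question", "name": q,
                 "acceptedAnswer": {"@type": "Answer", "text": a}}
                for q, a in faqs
            ],
        })
    graph.append({
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": i, "name": name, "item": url}
            for i, (name, url) in enumerate(crumbs, start=1)
        ],
    })
    return json.dumps({"@context": "https://schema.org", "@graph": graph}, indent=2)


print(blog_jsonld(
    {"headline": "How to Rewrite Pages for AI Search",
     "date_published": "2026-03-02", "date_modified": "2026-03-16",
     "author": "Jane Doe", "publisher": "Example Co"},
    faqs=[("Do I need to rewrite every page at once?",
           "No. Start with a prioritized set of 5 to 10 pages.")],
    crumbs=[("Blog", "https://example.com/blog"),
            ("AI search", "https://example.com/blog/ai-search")],
))
```

The output would go inside a `<script type="application/ld+json">` element on the page; Google's Rich Results Test is one way to validate it before shipping.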

Crawl Access: The Prerequisite Most Teams Skip

Structure and schema are irrelevant if the relevant retrieval systems cannot access the page in the first place.

Google's robots.txt documentation confirms that Googlebot respects Disallow directives. Most major AI crawlers, such as OpenAI's GPTBot and PerplexityBot, publish their user-agent tokens and say they follow the same conventions.

The access checklist before optimizing a page is straightforward:

- Is a Disallow rule blocking the URL pattern for any relevant bot?

- Does the page return a 200 status for the user-agent strings that matter?

- Is the canonical URL the one you expect to be indexed?

- Does the page rely on JavaScript rendering that may not be accessible to all crawlers?

A page that passes structural review but fails a crawl test is invisible to AI retrieval systems regardless of content quality.
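Two items on that checklist can be screened offline with the Python standard library: whether robots.txt rules allow a given user-agent to fetch a URL, and which canonical URL the page declares. A minimal sketch, assuming you already have the robots.txt body and the page HTML as strings. `urllib.robotparser` applies simple first-match prefix rules and does not implement every extension Googlebot supports (such as `*` wildcards in paths), so treat a result as a quick screen rather than a definitive answer. The status-code and JavaScript-rendering checks need live requests and are not covered here.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


def allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Check a URL against robots.txt rules for one user-agent token."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


class CanonicalFinder(HTMLParser):
    """Collect the href of the first <link rel="canonical"> tag."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        rels = (attr.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rels and self.canonical is None:
            self.canonical = attr.get("href")


def canonical_url(page_html: str):
    """Return the declared canonical URL, or None if the page has none."""
    finder = CanonicalFinder()
    finder.feed(page_html)
    return finder.canonical


ROBOTS = """User-agent: GPTBot
Disallow: /drafts/

User-agent: *
Disallow: /private/
"""

for bot in ("GPTBot", "PerplexityBot"):
    for url in ("https://example.com/guide", "https://example.com/drafts/x"):
        print(bot, url, allowed(ROBOTS, bot, url))

print(canonical_url('<head><link rel="canonical" href="https://example.com/guide"></head>'))
```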

How to Sequence the Work Across a Page Set

The ordering question matters because trying to optimize every page at once spreads effort too thin and makes it impossible to attribute what moved.

A workable sequence:

Week 1: From your audit set, pick the 5 most commercially important pages. Confirm crawl access and citation visibility for each, then identify the 2 or 3 with the highest citation potential.

Week 2: Run the opening rewrite and citation audit on those 2 or 3 pages. Ship the changes. Record the revision date and the specific change made.

Weeks 3 and 4: Monitor crawler revisits, citation movement, and AI-origin referral traffic for the edited pages. Compare against the unedited pages in your set.

Ongoing: Work through the remaining pages in descending order of commercial importance.

That cadence gives you a usable link between each change and its result: when citation rate moves, you have a record of what shipped and when, which is the input attribution depends on.
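A change record and the edited-versus-unedited comparison from weeks 3 and 4 fit in a few lines. This Python sketch assumes you track a per-page citation rate (for example, the share of a fixed prompt set whose AI answers cite the page) before and after the change window; that metric definition, the `Revision` record, and the function names are hypothetical. The comparison is a simple difference in differences with no significance testing, so with only a handful of pages it indicates direction rather than proof.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean


@dataclass
class Revision:
    url: str
    shipped: date   # when the change went live
    change: str     # what specifically changed


def mean_change(before: dict, after: dict, urls) -> float:
    """Average change in citation rate across the given pages."""
    return mean([after[u] - before[u] for u in urls])


def edited_minus_unedited(before: dict, after: dict, log: list) -> float:
    """Edited pages' average change minus unedited pages' average change.

    Needs at least one page in each group; statistics.mean raises on empty input.
    """
    edited = {rev.url for rev in log}
    unedited = set(before) - edited
    return mean_change(before, after, edited) - mean_change(before, after, unedited)


log = [
    Revision("/pricing", date(2026, 3, 9), "answer-first opening"),
    Revision("/guide", date(2026, 3, 9), "inline named-source links"),
]
before = {"/pricing": 0.10, "/guide": 0.20, "/docs": 0.10, "/faq": 0.30}
after = {"/pricing": 0.30, "/guide": 0.30, "/docs": 0.10, "/faq": 0.30}
print(round(edited_minus_unedited(before, after, log), 3))
```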

If you need the surrounding commercial and technical context, use the pricing page to scope the workflow, the technical manifesto to align on product direction, and the broader blog for adjacent implementation guides.

How to Confirm Changes Are Working

A content change is not complete until you know whether it moved a signal you can actually observe.

For AI-optimized content, that means checking:

- Did the relevant AI crawler revisit the page after the update?

- Did citation rate change within the tracking window?

- Did AI-origin referral traffic shift?

- Can that shift be attributed to this page and this change?

If you cannot answer those questions, the optimization is hypothetical.
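The first question, whether an AI crawler came back after the update, can be answered from server logs. A minimal sketch, assuming log entries have already been parsed into (timestamp, path, user-agent) tuples. The crawler tokens listed are examples of published user-agent tokens, not a complete list, and matching on user-agent text alone cannot tell a genuine crawler from a request that copies its user-agent string.

```python
from datetime import datetime

# Example AI crawler user-agent tokens; not an exhaustive list.
AI_CRAWLER_TOKENS = ("GPTBot", "OAI-SearchBot", "PerplexityBot", "ClaudeBot")


def crawler_revisits(entries, path: str, updated_at: datetime) -> set:
    """Return the AI crawler tokens that requested `path` after `updated_at`.

    entries: iterable of (timestamp, request_path, user_agent) tuples.
    """
    seen = set()
    for ts, req_path, user_agent in entries:
        if req_path != path or ts <= updated_at:
            continue
        ua = user_agent.lower()
        for token in AI_CRAWLER_TOKENS:
            if token.lower() in ua:
                seen.add(token)
    return seen


updated = datetime(2026, 3, 9, 12, 0)
entries = [
    (datetime(2026, 3, 8, 9, 0), "/guide", "Mozilla/5.0; compatible; GPTBot/1.1"),
    (datetime(2026, 3, 10, 8, 0), "/guide", "Mozilla/5.0 (compatible; PerplexityBot/1.0)"),
    (datetime(2026, 3, 11, 8, 0), "/pricing", "Mozilla/5.0; compatible; GPTBot/1.1"),
]
print(crawler_revisits(entries, "/guide", updated))
```

An empty result after a reasonable window is a cue to recheck crawl access before reading anything into flat citation numbers.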

The workflow described here is designed to make attribution possible. It does not guarantee an outcome, but it does make it easier to see why outcomes changed. That is the difference between a GEO program and a GEO experiment log.

Frequently Asked Questions

What is the most important change I can make to an existing page?

Lead with the answer in the first sentence. If the page buries its core claim, no structural improvement downstream will fully compensate.

Do I need to rewrite every page at once?

No. A prioritized set of 5 to 10 pages gives you enough signal to learn from without overspending rewrite budget upfront.

Does adding schema markup directly improve AI citations?

Not directly. Schema improves entity clarity and content classification, which reduces the inference cost for retrieval systems. That is a precondition for citation, not a guarantee.

How long before I see citation changes after a structural rewrite?

It depends on crawl frequency and the surface you are targeting. Faster AI crawlers may revisit within days. Some citation changes take several weeks to show up in measurable volume.

What if my page already has good structure?

Then the next move is to improve factual density by replacing vague claims with specific ones and adding named-source attribution to each important claim.

Should I update pages or create new ones?

Start with updates. New pages start without crawl history, citation signal, or internal linking weight. Improving high-value existing pages is usually the faster path to measurable AI visibility change.


Sources

- Aggarwal et al., "GEO: Generative Engine Optimization", arXiv, November 2023.

- Google Search Central, "Understand how structured data works", accessed March 2026.

- Google Search Central, "Introduction to robots.txt", accessed March 2026.

- Perplexity AI, "How does Perplexity work?", accessed March 2026.